Artificial intelligence (AI) has transitioned from hype to a defining strategic capability across private markets. Over the past year, we have spoken with several market leaders, and the consensus is clear: Private markets are entering the “reality phase” of AI adoption. The winners will be those who pair high-quality data foundations with workflow-specific solutions that deliver measurable business outcomes.

For limited partners (LPs), AI presents a transformative opportunity to strengthen oversight, deepen due diligence, and recognize risks or opportunities well before traditional reporting cycles reveal them. However, the path from AI hype to tangible results is littered with failed pilots and unfulfilled promises. Deriving real value requires more than deploying tools—it starts with access to high-quality, well-structured data, which serves as the foundation for every meaningful AI application. Beyond data readiness, success demands governance discipline and tight alignment with investment workflows.

AI raises a broad set of questions for LPs, from hidden AI-related risks and opportunities within private portfolios to evolving GP practices and shifting competitive dynamics across asset classes. Each deserves careful, standalone analysis. This paper has a narrower aim: a practical roadmap for LPs to implement AI. Whether you have a dedicated data team or a lean investment staff managing operations alongside diligence, the principles we outline scale to your context. Informed by market intelligence and our own deployment experience, we show how to avoid the common pitfalls that trap most organizations in “pilot purgatory.” The message is simple: LPs that embrace AI responsibly and pragmatically will gain an analytical and operational edge in an increasingly complex private markets ecosystem.

Understanding the market context

Before implementing AI, LPs need to understand the current landscape—not to predict the future, but to make informed decisions about where to invest resources.

The executive–employee perception gap

Industry research reveals that approximately 80% of executives believe their firms are successfully adopting AI, while employees report implementation frustration and “AI fatigue.” With time, this gap should narrow. The firms that improve AI literacy and invest in upskilling, usage, and sophistication will be better positioned to capture AI’s long-term value.

Where value is created

Organizations no longer gain a competitive advantage from the models themselves. Instead, differentiation comes from how effectively you integrate AI into your existing workflows, combine it with proprietary data, and embed it into daily processes. For LPs, this means focusing on workflow integration and real business problems—not on which AI model to use.

The mediocre solution trap

Despite rapid adoption, many firms get stuck in pilot purgatory and find it nearly impossible to demonstrate a concrete ROI. Most vendor AI solutions look impressive in demos but fall short in production, delivering 50–70% effectiveness when users demand >90% reliability. That gap of at least 20 points is often the difference between adoption and abandonment.

Reasons include:

- Poor data hygiene and fragmented sources;

- Vendor tools that work in demos but fail in real workflows;

- Lack of integration into business processes; and

- Misalignment between executive expectations and operational realities.

For LPs, this gap is critical. Vendor claims should be evaluated through the lens of data quality, workflow fit, and actual deployment evidence—not slideware.

Core AI use cases for LPs

Drawing from both market signals and our internal roadmap, LPs can benefit from AI across five main areas:

1. Data extraction and standardization

LPs can use AI to systematically collect and standardize data from unstructured quarterly reports, financial statements, capital account statements, fund memos, and legal agreements. This reduces manual effort while improving data accuracy, timeliness, and analytical insight across portfolio monitoring and due diligence workflows. Because this is one of the most vendor-saturated areas in the LP technology stack, the evaluation discipline discussed earlier is especially important here.

How LPs can implement:

- Identify high-volume, manual tasks (e.g., updating capital account balances).

- Train parsing models on specific document types.

- Implement validation rules to catch errors before any data enters your system.

- Pilot with a single fund or GP to prove the model, then scale.
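
The validation step above can be illustrated with a minimal Python sketch. It assumes a hypothetical `CapitalAccountRecord` shape for one extracted statement and a simple roll-forward rule (beginning balance plus contributions, minus distributions, plus net gain or loss, should equal the ending balance). The field names and the tolerance are illustrative assumptions, not a standard schema; real statements will need rules tailored to each GP's reporting format.

```python
from dataclasses import dataclass


@dataclass
class CapitalAccountRecord:
    """Hypothetical shape of one extracted capital account statement."""
    fund: str
    beginning_balance: float
    contributions: float
    distributions: float
    net_gain_loss: float
    ending_balance: float


def validate(record: CapitalAccountRecord, tolerance: float = 0.01) -> list[str]:
    """Return a list of problems; an empty list means the record passes."""
    problems = []
    if not record.fund.strip():
        problems.append("missing fund name")
    if record.contributions < 0:
        problems.append("contributions should not be negative")
    if record.distributions < 0:
        problems.append("distributions should not be negative")
    # Roll-forward check: the extracted ending balance should reconcile
    # with the other extracted figures within a small tolerance.
    expected = (record.beginning_balance + record.contributions
                - record.distributions + record.net_gain_loss)
    if abs(expected - record.ending_balance) > tolerance:
        problems.append(
            f"ending balance {record.ending_balance:,.2f} does not match "
            f"roll-forward {expected:,.2f}"
        )
    return problems


good = CapitalAccountRecord("Fund A", 1_000_000.0, 250_000.0, 100_000.0, 50_000.0, 1_200_000.0)
bad = CapitalAccountRecord("Fund B", 1_000_000.0, 250_000.0, 100_000.0, 50_000.0, 1_300_000.0)

for record in (good, bad):
    issues = validate(record)
    status = "OK" if not issues else "HOLD FOR REVIEW: " + "; ".join(issues)
    print(f"{record.fund}: {status}")
```

Records that return any problems would be routed to a human reviewer rather than loaded, which keeps extraction errors out of downstream monitoring.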

2. Automated fund monitoring

By analyzing large volumes of GP reporting to flag unusual valuation movements, performance anomalies, fee inconsistencies, operating metric deviations, or strategy drift, LPs can transform their monitoring from reactive to predictive.

How LPs can implement:

- Start by extracting quantitative metrics from quarterly reports.

- Define anomaly detection rules specific to the portfolio (e.g., valuation increases exceeding peer benchmarks by >20%).

- Create dashboards to surface exceptions that need attention.

- Integrate outputs with existing investment monitoring workflows.
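
As a rough illustration of the anomaly rule mentioned above, the sketch below flags funds whose quarterly valuation change exceeds a peer benchmark by more than 20 percentage points. That reading of the ">20%" rule, the function name `flag_valuation_outliers`, and the sample figures are all assumptions made for illustration; each LP would define thresholds and benchmarks suited to its own portfolio.

```python
def flag_valuation_outliers(fund_changes, peer_benchmark_change, threshold=0.20):
    """Return (fund, excess) pairs for funds whose quarterly valuation change
    exceeds the peer benchmark by more than `threshold`, largest excess first."""
    flagged = []
    for fund, change in fund_changes.items():
        excess = change - peer_benchmark_change
        if excess > threshold:
            flagged.append((fund, round(excess, 4)))
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Illustrative quarterly valuation changes expressed as decimals (0.32 = +32%).
quarterly_changes = {
    "Fund A": 0.05,
    "Fund B": 0.32,
    "Fund C": -0.02,
    "Fund D": 0.27,
}

exceptions = flag_valuation_outliers(quarterly_changes, peer_benchmark_change=0.04)
for fund, excess in exceptions:
    print(f"{fund}: {excess:.0%} above peer benchmark")
```

Only the exceptions list would feed the dashboard, so reviewers see the handful of funds that need attention rather than every data point.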

3. Market intelligence and knowledge management

Aggregate intelligence from news flows, published economic or industry research, earnings calls, GP reports, meeting notes, and internal memos to enable natural language querying and faster insight discovery.

How LPs can implement:

- Ingest documents (e.g., investment memos) with strong metadata.

- Focus on specific, high-value queries instead of trying to answer every question.

- Set realistic expectations. For instance, chatbots work best with a well-structured knowledge base (e.g., documents with metadata).

- Build iteratively: Start with a single document type or GP relationship, then broaden the aperture.

- Integrate into due diligence workflows. For example, autogenerate premeeting briefs that consolidate past discussions, recent quarterly updates, and relevant news.

4. Meeting intelligence and notetaking

LPs spend significant time in IC meetings, GP diligence calls, annual meetings, and portfolio reviews, yet critical qualitative insights may be lost or captured inconsistently.

AI notetaking can record discussions, generate structured summaries, extract decisions and action items, and link insights to funds or GPs—strengthening institutional memory and continuity across long investment cycles. Because meeting content is highly sensitive, this use case demands robust security and governance.

How LPs can implement:

- Establish clear policies on consent, recording, and disclosure.

- Require enterprise controls: encryption, approved data residency, defined retention, and exclusion from model training.

- Generate structured outputs (decisions, risks, follow-ups), not just transcripts.

- Apply role-based access, especially for IC and diligence discussions.

- Integrate notes directly into IC prep, diligence, and monitoring workflows.

- Maintain human review for accuracy and trust.

5. Reporting and stakeholder communication

Generate draft narratives for internal reports, tailored by asset class. This reduces operational load and enhances transparency for investment committees and other stakeholders.

How LPs can implement:

- Identify repetitive reporting tasks for which the structure is consistent but the data changes (e.g., fund performance summaries).

- Build templates that reflect your institutional voice and compliance needs.

- Always include human review: AI drafts; humans refine and approve.

Implementation roadmap for LPs

Successful AI implementation requires a problem-first approach. Avoid the “tool looking for a problem” trap. Instead, identify concrete friction points, then seek AI-augmented solutions aligned with strategy, data, people, and governance.

Phase 1: Strategy & readiness

Identify high-friction workflows where AI accelerates decision making.

What to do:

- Survey your team to identify repetitive, low-value tasks.

- Quantify the pain: How many hours per month? How many people are affected? What is the error rate?

- Prioritize workflows where AI can accelerate decision making or eliminate bottlenecks, not just reduce headcount.

- Assess your data foundation: Do you have the data quality and completeness needed? Garbage in equals hallucinations out.

Success criterion: A ranked list of 3–5 workflows that can deliver measurable value within six months.
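
One way to turn the "quantify the pain" questions into a ranked list is sketched below. The scoring formula (total monthly hours, inflated by the error rate as a rough proxy for rework) is a hypothetical heuristic, and the workflow names and figures are invented for illustration. It captures effort only; judgment about decision-making impact and data readiness still has to be applied on top.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    hours_per_person_per_month: float
    people_affected: int
    error_rate: float  # share of outputs needing rework, 0.0 to 1.0


def pain_score(w: Workflow) -> float:
    """Total monthly hours, inflated by the error rate as a rough rework proxy."""
    return w.hours_per_person_per_month * w.people_affected * (1 + w.error_rate)


# Illustrative survey results; replace with your own team's figures.
candidates = [
    Workflow("Capital account updates", 10, 3, 0.05),
    Workflow("Quarterly report data entry", 20, 4, 0.10),
    Workflow("Pre-meeting brief preparation", 6, 5, 0.02),
]

ranked = sorted(candidates, key=pain_score, reverse=True)
for position, w in enumerate(ranked, start=1):
    print(f"{position}. {w.name}: {pain_score(w):.1f} weighted hours/month")
```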

Phase 2: Build vs. buy?

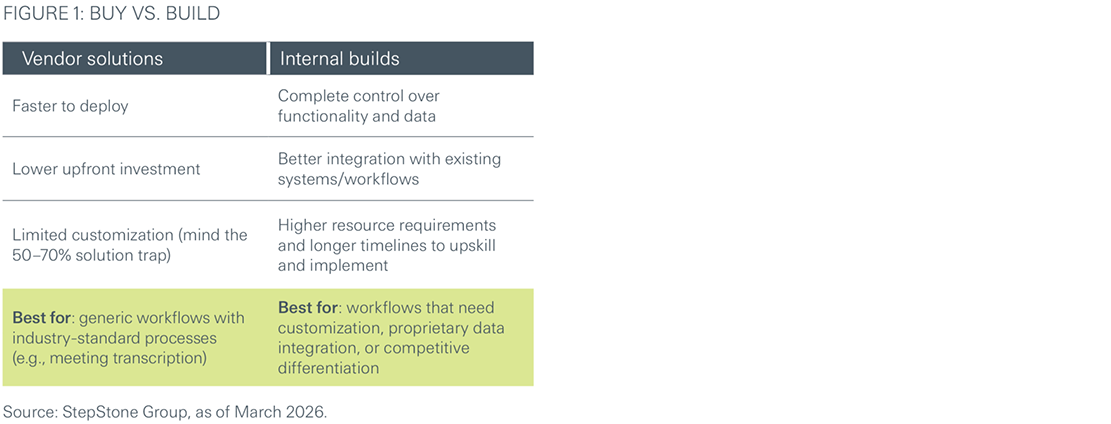

LPs must choose among third-party solutions, a proprietary build, or a combination of the two (Figure 1).

Because leading AI models now offer broadly comparable performance across many workflows, differentiation increasingly comes from the agents, system prompts, and specialized “orchestration” layers built on top—rather than from the underlying model itself.

Many larger firms (ourselves included) use a “hub and spoke” model whereby a central AI team sets the strategy, infrastructure, and governance, while business-unit champions drive execution. Smaller LP teams can achieve the same result by designating an internal champion who owns the AI roadmap while collaborating closely with end users. The core principle is the same: The people who own the workflows should shape the AI solutions.

Regardless of the approach, LPs should be cautious about long-term vendor contracts. The AI market is evolving rapidly. Increasingly, foundational model providers (like Anthropic and OpenAI) are shipping their own agents, coding tools, and plugins that may replace capabilities currently offered by third-party vendors. In particular, the industry has recently seen a step change in functionality; having access to the latest models, agents, and plugins provides a material edge. Keeping contracts short and flexible preserves optionality and ensures LPs do not get locked into yesterday’s technology.

Phase 3: Integrate, prove value, then scale

AI only delivers value when embedded within actual processes (e.g., IC prep). Training, change management, and workflow redesign often matter more than the technology itself.

Best practices:

- Pick one high-friction workflow (not five). Focus on a quick win that can demonstrate value within six months.

- Deploy to a small pilot group—even a single power user on a small team, or 5–10 users at a larger organization. Gather feedback, iterate rapidly, and address pain points before broader rollout.

- Measure concrete outcomes like time saved or errors reduced. Vague claims about “efficiency gains” won’t drive adoption.

- Highlight success internally. Peer-to-peer adoption is far more effective than top-down mandates. Testimonials from coworkers can go a long way.

- Scale broadly. Once you have proven the >90% effectiveness threshold, roll out to the full organization.

- Address implementation fatigue. Recall the executive–employee perception gap described earlier: employees get burned out on AI when use cases are unclear or training is inadequate. Provide clear guidelines, adequate upskilling time, and dedicated support during rollout. We have found live, hands-on tutorials, in which team members work through real workflows with AI tools, to be much more effective than passive training materials.

- Manage the human element. Position AI as augmentation, not replacement. This framing from Harvard Business School—“AI won’t replace humans, but humans with AI will replace humans without AI”—shifts the culture from fear to motivation.

- Establish feedback loops. Conduct periodic post-mortems on AI outputs that missed the mark. Understanding why a model produced an incorrect extraction or an erroneous red flag is critical to improving accuracy. Treat failures as learning opportunities, not reasons to abandon the tool.
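
The measurement and scaling practices above can be made concrete with a small sketch. It assumes that "effectiveness" is measured as the share of AI outputs a human reviewer accepted without correction during the pilot, which is one possible definition among several; the function names and sample counts are illustrative.

```python
def effectiveness(reviewed):
    """Share of AI outputs that a human reviewer accepted without correction.

    `reviewed` is a list of booleans, one per reviewed output."""
    if not reviewed:
        raise ValueError("no reviewed outputs to measure")
    return sum(1 for accepted in reviewed if accepted) / len(reviewed)


def ready_to_scale(reviewed, threshold=0.90):
    """True when measured effectiveness exceeds the rollout threshold."""
    return effectiveness(reviewed) > threshold


# Illustrative pilot: 50 reviewed extractions, 46 accepted as-is.
pilot_reviews = [True] * 46 + [False] * 4

print(f"Effectiveness: {effectiveness(pilot_reviews):.0%}")
print(f"Ready to scale: {ready_to_scale(pilot_reviews)}")
```

The rejected outputs are the natural input to the post-mortems described above: each one is a specific case to examine before the next iteration.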

Phase 4: Governance and risk controls

As outlined in our paper on Responsible AI, adequate controls can help ensure that AI protects value and is accretive to portfolios. Clear documentation around model usage and limitations; data governance and lineage controls; and vendor/GP accountability are just a few of the forms these controls can take. LPs should also ensure clarity on data ownership, particularly when using third-party vendors. Understand where your data is stored, who can access it, and whether model output or training data create intellectual property considerations. AI notetaking tools deserve particular scrutiny given the sensitivity of discussions between GPs and LPs. AI is only as dependable as the governance surrounding it.

Common pitfalls and how to avoid them

- Pilot purgatory—Endless pilots without production deployment. Set clear timelines and kill underperforming initiatives.

- Believing vendor demos—Demand proof of real-world deployment.

- Ignoring data quality—AI amplifies bad data. Treat data hygiene as a continuous discipline, not a one-time fix. AI will continually expose weaknesses in your data structure, requiring ongoing investment.

- Top-down mandates—Adoption requires user buy-in.

- Underestimating change management—Budget time and resources beyond the technology.

- Long-term contracts—A step change in AI functionality as of late 2025 means the latest models and agents offer a material edge. Keep contracts short and flexible to preserve optionality.

Conclusion

AI presents LPs with a powerful opportunity to elevate oversight, improve decision making, streamline operations, and influence GP behavior. But success requires data readiness, governance discipline, workflow integration, and a problem-first mindset.

LPs can set themselves up for success by:

- Focusing on data quality and workflow integration, not flashy AI features;

- Solving real business problems, not running perpetual pilots;

- Notching a few quick wins before attempting enterprise-wide transformation;

- Investing in governance and change management; and

- Recognizing that AI augments, rather than replaces, humans.

The private markets landscape is shifting toward faster decision cycles, richer data, and higher stakeholder expectations. LPs that embrace AI thoughtfully and responsibly will be better positioned to deliver long-term value and strategic leadership. The question is no longer whether to adopt AI, but how quickly and effectively LPs can move from hype to production.

We are actively implementing these approaches in our own work and are happy to share what we have learned, from vendor evaluation and build-versus-buy decisions to hands-on training that accelerates adoption. If this paper raises questions about your own AI journey, we welcome the opportunity to connect.